Replaces the previously separate repo, merging the Rust system into the mainline, ready for 1.4.

The Rust system currently provides:

* lqos_bus: type definitions for the localhost control bus.
* lqos_config: handler for configuration files.
* lqos_node_manager: local web-based monitor and manager.
* lqos_sys: eBPF program that handles all of the tracking, CPU assignment, VLAN redirect, TC queue assignment, and RTT tracking. It is wrapped in a Rust core, providing consistent in-Rust building and mostly-safe wrappers around the C code.
* lqosd: a daemon designed to run continually while LibreQoS is operating. On start, it parses the configuration files and sets up interface mapping (the Python code is still required to actually build queues). It then attaches the various eBPF programs to the appropriate interfaces. The eBPF systems are removed when the daemon terminates. lqosd also provides a "bus" that listens on localhost for requests for changes or information, providing a control plane for the rest of the project.
* lqtop: an example program demonstrating how to use the bus. It acts like "top", showing current network traffic and mappings.
* xdp_iphash_to_cpu_cmdline: a Rust wrapper providing the same services as the cpumap-originated tool of the same name. This is a "shim": it will go away once the native Python library is ready.
* xdp_pping: also a shim, providing services equivalent to the cpumap tool of the same name.

A helper shell script, "remove_pinned_maps.sh", can be used to remove all pinned eBPF maps from the system. This allows eBPF program upgrades that change persistent map structures without a reboot.

Signed-off-by: Herbert Wolverson <herberticus@gmail.com>
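Here is a rough illustration of what removing pinned maps involves. On bpffs, a pin is an ordinary file, and deleting it with unlink() drops the pin. The kernel frees the map once no loaded program or open file descriptor still references it.

The Python sketch below is not remove_pinned_maps.sh itself, and the function name `remove_pinned_objects` is hypothetical. The pin directory is passed in as a parameter because the source does not say where LibreQoS pins its maps; /sys/fs/bpf is only the conventional bpffs mount point. The sketch also assumes every file under that directory is safe to remove, and it defaults to a dry run.

```python
import os
from pathlib import Path


def remove_pinned_objects(pin_dir, dry_run=True):
    """Unlink every pinned object file found under pin_dir (hypothetical helper).

    Pinned bpffs objects appear as regular files; unlinking one drops the pin.
    Directories are left in place. Returns the sorted list of paths that were
    removed, or that would be removed when dry_run is True.
    """
    # Materialize the listing first so deleting files does not disturb iteration.
    candidates = sorted(p for p in Path(pin_dir).rglob("*") if p.is_file())
    removed = []
    for path in candidates:
        removed.append(str(path))
        if not dry_run:
            os.unlink(path)
    return removed
```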

LibreQoS is a Quality of Experience (QoE) Smart Queue Management (SQM) system designed for Internet Service Providers to optimize the flow of their network traffic and thus reduce bufferbloat, keep the network responsive, and improve the end-user experience.

Servers running LibreQoS can shape traffic for many thousands of customers.

Learn more at LibreQoS.io!

Support LibreQoS

Please support the continued development of LibreQoS by visiting our GitHub Sponsors page.

Features

Flexible Hierarchical Shaping / Back-Haul Congestion Mitigation

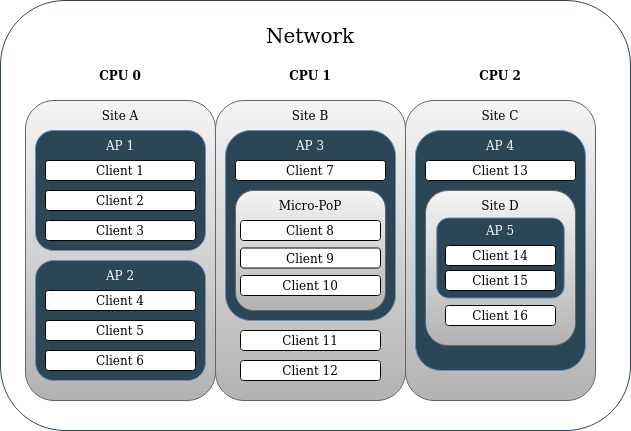

Starting with version 1.1, operators can map their network hierarchy in LibreQoS. This supports both simple network hierarchies (Site>AP>Client) and much more complex ones (Site>Site>Micro-PoP>AP>Site>AP>Client). It can be used to ensure that a given site’s peak bandwidth will not exceed the capacity of its back-haul links (back-haul congestion control). Operators can support more users on the same network equipment with LibreQoS than with competing QoE solutions, which only shape by AP and Client.
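The back-haul cap can be pictured as a tree walk. Each node's effective ceiling is the smaller of its own capacity and its parent's effective ceiling.

The Python sketch below uses a hypothetical nested-dict layout with `name`, `capacity_mbps` and `children` fields. It is not LibreQoS's configuration format, and the example capacities are made up for illustration.

```python
def effective_ceilings(node, parent_limit=float("inf"), out=None):
    """Walk a nested hierarchy, capping each node at its ancestors' capacity.

    node: dict with "name", "capacity_mbps", and an optional "children" list.
    Returns {name: effective ceiling in Mbps}.
    """
    if out is None:
        out = {}
    limit = min(node["capacity_mbps"], parent_limit)
    out[node["name"]] = limit
    for child in node.get("children", []):
        effective_ceilings(child, limit, out)
    return out


# Hypothetical Site>Micro-PoP>AP>Client chain with a 300 Mbps back-haul link.
site = {
    "name": "Site", "capacity_mbps": 1000,
    "children": [
        {"name": "Micro-PoP", "capacity_mbps": 300,
         "children": [
             {"name": "AP", "capacity_mbps": 500,
              "children": [{"name": "Client", "capacity_mbps": 100}]},
         ]},
    ],
}

print(effective_ceilings(site))
# {'Site': 1000, 'Micro-PoP': 300, 'AP': 300, 'Client': 100}
```

This shows only the per-node ceiling. In a hierarchical shaper, the parent's limit also applies to the combined traffic of all its children. All traffic below the Micro-PoP together therefore still cannot exceed its 300 Mbps back-haul.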

CAKE

CAKE is the product of nearly a decade of development effort to improve on fq_codel. With the diffserv4 parameter enabled, CAKE groups traffic into Bulk, Best Effort, Video, and Voice. This means that, without having to fine-tune traffic priorities as you would with DPI products, CAKE automatically ensures your clients’ OS update downloads will not disrupt their Zoom calls. It allows multiple video conferences to operate on the same connection, where they might otherwise “fight” for upload bandwidth and cause call disruptions. It holds the connection together like glue. With work-from-home, remote learning, and telemedicine becoming increasingly common, minimizing video call disruptions can save jobs, keep students engaged, and help ensure equitable access to medical care.
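To illustrate how diffserv4 sorts packets, the sketch below maps a packet's DSCP value to a tin. It uses the code point groups listed for diffserv4 in the tc-cake(8) man page, where LE in Bulk applies to kernel 5.9 and later.

This is a simplified model, not CAKE's kernel code. Legacy TOS code points are omitted, and the names `DIFFSERV4_TINS`, `diffserv4_tin` and `dscp_from_tos` are illustrative.

```python
# DSCP code points grouped per the diffserv4 description in tc-cake(8).
# Legacy TOS code points are omitted in this simplified model.
DIFFSERV4_TINS = {
    "Bulk": {8, 1},                           # CS1, LE (kernel 5.9+)
    "Video": {34, 36, 38, 26, 28, 30,         # AF4x, AF3x
              24, 18, 20, 22, 16},            # CS3, AF2x, CS2
    "Voice": {56, 48, 46, 44, 40, 32},        # CS7, CS6, EF, VA, CS5, CS4
}


def diffserv4_tin(dscp):
    """Return the tin for a 6-bit DSCP value; anything unlisted is Best Effort."""
    for tin, codepoints in DIFFSERV4_TINS.items():
        if dscp in codepoints:
            return tin
    return "Best Effort"


def dscp_from_tos(tos_byte):
    """DSCP is the upper six bits of the IPv4 TOS / IPv6 traffic-class byte."""
    return tos_byte >> 2
```

For example, a packet with TOS byte 0xB8 carries DSCP 46 (EF) and lands in the Voice tin. Unmarked traffic (DSCP 0) stays in Best Effort.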

XDP

Fast, multi-CPU queueing leveraging xdp-cpumap-tc and cpumap-pping. It has been tested in real-world deployments at more than 11 Gbps with just 30% CPU use on a 16-core Intel Xeon Gold 6254, and it is likely capable of 30 Gbps or more.

Graphing

You can graph bandwidth and TCP RTT by client and node (Site, AP, etc.), with great visualizations made possible by InfluxDB.

CRM Integrations

- UISP

- Splynx

System Requirements

VM or physical server

- For VMs, NIC passthrough is required for optimal throughput and latency (native XDP rather than generic XDP). Virtio / bridging is much slower than NIC passthrough and should not be used for large amounts of traffic.

CPU

- 2 or more CPU cores

- A CPU with solid single-thread performance within your budget.

- For 10G+ throughput on a budget, consider the AMD Ryzen 9 5900X or Intel Core i7-12700KF

- CPU core count required, assuming a single-thread performance score of 2700 or more:

| Throughput | CPU Cores |
|---|---|
| 500 Mbps | 2 |
| 1 Gbps | 4 |
| 5 Gbps | 8 |
| 10 Gbps | 12 |
| 20 Gbps* | 16 |
| 50 Gbps* | 32 |
| 100 Gbps* | 64 |

(* Estimated)
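The core-count table can be read as a sizing lookup. This sketch assumes you round a target throughput up to the next row, and that the single-thread performance assumption above holds. The helper name `cores_for` is hypothetical, and rows marked * remain estimates.

```python
# (throughput in Mbps, CPU cores) rows from the core-count table; 20 Gbps and up are estimates.
CORE_TABLE = [
    (500, 2),
    (1_000, 4),
    (5_000, 8),
    (10_000, 12),
    (20_000, 16),
    (50_000, 32),
    (100_000, 64),
]


def cores_for(throughput_mbps):
    """Core count of the first table row whose throughput covers the target, or None."""
    for row_mbps, cores in CORE_TABLE:
        if throughput_mbps <= row_mbps:
            return cores
    return None  # target exceeds the largest row in the table
```

For example, `cores_for(800)` returns 4. Anything above 100 Gbps returns None because the table stops there.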

Memory

- Minimum RAM = 2 + (0.002 × Subscriber Count) GB

- Recommended RAM:

| Subscribers | RAM |
|---|---|
| 100 | 4 GB |
| 1,000 | 8 GB |
| 5,000 | 16 GB |
| 10,000* | 32 GB |
| 50,000* | 48 GB |

(* Estimated)
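The minimum-RAM formula is simple arithmetic. The sketch below evaluates it directly and also rounds up to whole gigabytes. The rounding uses integer math in thousandths of a GB to avoid floating-point rounding surprises. The function names are hypothetical.

```python
def minimum_ram_gb(subscribers):
    """Minimum RAM in GB: 2 + 0.002 * subscriber count."""
    return 2 + 0.002 * subscribers


def minimum_ram_gb_ceil(subscribers):
    """Same formula, rounded up to a whole GB.

    Works in thousandths of a GB: (2000 + 2 * n) / 1000, using integer
    ceiling division so float error cannot push the result up a step.
    """
    return -(-(2000 + 2 * subscribers) // 1000)
```

For 1,000 subscribers, the formula gives 2 + 2 = 4 GB, below the 8 GB recommended for that size.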

Network Interface Requirements

- One management network interface completely separate from the traffic shaping interfaces. Usually this would be the Ethernet interface built into the motherboard.

- Dedicated Network Interface Card for Shaping Interfaces

- NIC must have 2 or more interfaces for traffic shaping.

- NIC must have multiple TX/RX queues. Here's how to check from the command line.

- Known supported cards:

  - NVIDIA Mellanox MCX512A-ACAT

  - Intel X710

  - Intel X520
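One way to see how many queues an interface exposes on Linux is to count the `rx-N` and `tx-N` directories under `/sys/class/net/<interface>/queues`. The sketch below does that; the function names are hypothetical, and the sysfs root is a parameter so the logic can be tested. It reports the queues currently configured, which may be fewer than the hardware maximum.

```python
from pathlib import Path


def count_queues(iface, sys_net="/sys/class/net"):
    """Return (rx_queues, tx_queues) by counting the interface's sysfs queue directories."""
    names = [p.name for p in (Path(sys_net) / iface / "queues").iterdir()]
    rx = sum(1 for n in names if n.startswith("rx-"))
    tx = sum(1 for n in names if n.startswith("tx-"))
    return rx, tx


def has_multiple_queues(iface, sys_net="/sys/class/net"):
    """True only if the interface currently has more than one RX and more than one TX queue."""
    rx, tx = count_queues(iface, sys_net)
    return rx > 1 and tx > 1
```

For example, `has_multiple_queues("eth1")` (the interface name is an assumption) returns True only when both directions have more than one queue.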