Compare commits

72 Commits

2022.1.0.d...2021.1

| Author | SHA1 | Date | |
|---|---|---|---|
| | f557dca475 | | |
| | 185fe44080 | | |
| | 2a1f43a64a | | |
| | f19d1d16f0 | | |
| | d98beb796b | | |
| | 915858198e | | |
| | 8d4545e1b2 | | |
| | e45272c714 | | |
| | fe3dc7d176 | | |
| | 9c297a3174 | | |
| | f9c692b885 | | |
| | bbce6f5b3a | | |
| | 2395f9f120 | | |
| | c88f838dfa | | |
| | ce6ce23eec | | |
| | 6a32854ec4 | | |
| | bece22ac67 | | |
| | 76606ba2fc | | |
| | 1c538af62f | | |
| | 3a720d188b | | |
| | 70f619b5eb | | |
| | 0dbaf078d8 | | |
| | 3c5fa6f4b8 | | |
| | 31ccf354dc | | |
| | bf9b649cdf | | |
| | 84518964ba | | |
| | 0b4846cfcc | | |
| | 950388d9e8 | | |
| | f828b16f40 | | |
| | 261bd3de6b | | |
| | 31b3e356ab | | |
| | 607982e79c | | |
| | c083e5b146 | | |
| | 444301a1d6 | | |
| | f56ba0daa9 | | |
| | cd101085d7 | | |
| | 2c79f74579 | | |
| | d7463eb216 | | |
| | 74b13a0f74 | | |
| | 1c8188908e | | |
| | 86e39a6775 | | |
| | 2645421df6 | | |
| | 9b1961502b | | |
| | 2023a7cd81 | | |
| | 105cd18d0b | | |
| | 92d19291c8 | | |
| | 191e9f7f72 | | |
| | 126c2600bb | | |
| | b922800ae2 | | |
| | 272b17f5d9 | | |
| | b89e7d69dd | | |
| | 528e6f9328 | | |
| | ebf009d1a1 | | |
| | d604a03ac0 | | |
| | e7e82b9eb7 | | |
| | f5bd16990e | | |
| | 488f2dd916 | | |
| | 79853baf28 | | |
| | 6c5e0cfaa4 | | |
| | d239b2584c | | |
| | 28a733b771 | | |
| | 7bba2a9542 | | |
| | 9b7e22f49a | | |
| | a4dc5c89f3 | | |
| | fef1803a86 | | |
| | e94393df10 | | |
| | 2e4f46e1fd | | |
| | 177906b99a | | |
| | 6d38488462 | | |
| | db5aa551af | | |
| | 6d90eedbd2 | | |
| | a91e256d27 | | |

118

.ci/azure/linux.yml

Normal file

@@ -0,0 +1,118 @@
+jobs:
+- job: Lin
+  # About 150% of total time
+  timeoutInMinutes: 85
+  pool:
+    name: LIN_VMSS_VENV_F8S_WU2
+  variables:
+    system.debug: true
+    WORKERS_NUMBER: 8
+    BUILD_TYPE: Release
+    REPO_DIR: $(Build.Repository.LocalPath)
+    WORK_DIR: $(Pipeline.Workspace)/_w
+    BUILD_DIR: $(WORK_DIR)/build
+    BIN_DIR: $(REPO_DIR)/bin/intel64/$(BUILD_TYPE)
+  steps:
+  - checkout: self
+    clean: true
+    fetchDepth: 1
+    lfs: false
+    submodules: recursive
+    path: openvino
+  - script: |
+      curl -H Metadata:true --noproxy "*" "http://169.254.169.254/metadata/instance?api-version=2019-06-01"
+      whoami
+      uname -a
+      which python3
+      python3 --version
+      gcc --version
+      lsb_release
+      env
+      cat /proc/cpuinfo
+      cat /proc/meminfo
+      vmstat -s
+      df
+    displayName: 'System info'
+  - script: |
+      rm -rf $(WORK_DIR) ; mkdir $(WORK_DIR)
+      rm -rf $(BUILD_DIR) ; mkdir $(BUILD_DIR)
+    displayName: 'Make dir'
+  - script: |
+      sudo apt --assume-yes install libusb-1.0-0-dev
+      python3 -m pip install -r ./inference-engine/ie_bridges/python/requirements.txt
+      # For running Python API tests
+      python3 -m pip install -r ./inference-engine/ie_bridges/python/src/requirements-dev.txt
+    displayName: 'Install dependencies'
+  - script: |
+      wget https://github.com/ninja-build/ninja/releases/download/v1.10.0/ninja-linux.zip
+      unzip ninja-linux.zip
+      sudo cp -v ninja /usr/local/bin/
+    workingDirectory: $(WORK_DIR)
+    displayName: 'Install Ninja'
+  - task: CMake@1
+    inputs:
+      # CMake must get Python 3.x version by default
+      cmakeArgs: -GNinja -DVERBOSE_BUILD=ON -DCMAKE_BUILD_TYPE=$(BUILD_TYPE) -DENABLE_PYTHON=ON -DPYTHON_EXECUTABLE=/usr/bin/python3.6 -DENABLE_TESTS=ON $(REPO_DIR)
+      workingDirectory: $(BUILD_DIR)
+  - script: ninja
+    workingDirectory: $(BUILD_DIR)
+    displayName: 'Build Lin'
+  - script: ls -alR $(REPO_DIR)/bin/
+    displayName: 'List files'
+  - script: $(BIN_DIR)/unit-test --gtest_print_time=1 --gtest_filter=-backend_api.config_unsupported:*IE_GPU*
+    displayName: 'nGraph UT'
+    continueOnError: false
+  - script: $(BIN_DIR)/InferenceEngineUnitTests --gtest_print_time=1
+    displayName: 'IE UT old'
+    continueOnError: false
+  - script: $(BIN_DIR)/ieUnitTests
+    displayName: 'IE UT'
+    continueOnError: false
+  - script: $(BIN_DIR)/cpuUnitTests
+    displayName: 'CPU UT'
+    continueOnError: false
+  - script: $(BIN_DIR)/gnaUnitTests
+    displayName: 'GNA UT'
+    continueOnError: false
+  - script: $(BIN_DIR)/vpuUnitTests
+    displayName: 'VPU UT'
+    continueOnError: false
+  - script: $(BIN_DIR)/ieFuncTests
+    displayName: 'IE FuncTests'
+    continueOnError: false
+  - script: $(BIN_DIR)/cpuFuncTests --gtest_print_time=1
+    displayName: 'CPU FuncTests'
+    continueOnError: false
+  - script: $(BIN_DIR)/MklDnnBehaviorTests
+    displayName: 'MklDnnBehaviorTests'
+    continueOnError: false
+  - script: |
+      git clone https://github.com/openvinotoolkit/testdata.git
+      git clone https://github.com/google/gtest-parallel.git
+    workingDirectory: $(WORK_DIR)
+    displayName: 'Clone testdata & gtest-parallel'
+  - script: |
+      export DATA_PATH=$(WORK_DIR)/testdata
+      export MODELS_PATH=$(WORK_DIR)/testdata
+      python3 $(WORK_DIR)/gtest-parallel/gtest-parallel $(BIN_DIR)/MklDnnFunctionalTests --workers=$(WORKERS_NUMBER) --print_test_times --dump_json_test_results=MklDnnFunctionalTests.json -- --gtest_print_time=1
+    workingDirectory: $(WORK_DIR)
+    displayName: 'MklDnnFunctionalTests'
+    continueOnError: false
+  - script: |
+      export DATA_PATH=$(WORK_DIR)/testdata
+      export MODELS_PATH=$(WORK_DIR)/testdata
+      $(BIN_DIR)/InferenceEngineCAPITests
+    displayName: 'IE CAPITests'
+    continueOnError: false
+  - script: |
+      export DATA_PATH=$(WORK_DIR)/testdata
+      export MODELS_PATH=$(WORK_DIR)/testdata
+      export LD_LIBRARY_PATH=$(BIN_DIR)/lib
+      export PYTHONPATH=$(BIN_DIR)/lib/python_api/python3.6
+      env
+      cd $(REPO_DIR)/inference-engine/ie_bridges/python/tests
+      pytest
+    displayName: 'Python API Tests'
+    continueOnError: false
+    enabled: false
+

102

.ci/azure/mac.yml

Normal file

@@ -0,0 +1,102 @@
+jobs:
+- job: Mac
+  # About 200% of total time (performance of Mac hosts is unstable)
+  timeoutInMinutes: 180
+  pool:
+    vmImage: 'macOS-10.15'
+  variables:
+    system.debug: true
+    WORKERS_NUMBER: 3
+    BUILD_TYPE: Release
+    REPO_DIR: $(Build.Repository.LocalPath)
+    WORK_DIR: $(Pipeline.Workspace)/_w
+    BUILD_DIR: $(WORK_DIR)/build
+    BIN_DIR: $(REPO_DIR)/bin/intel64/$(BUILD_TYPE)
+  steps:
+  - checkout: self
+    clean: true
+    fetchDepth: 1
+    lfs: false
+    submodules: recursive
+    path: openvino
+  - script: |
+      whoami
+      uname -a
+      which python3
+      python3 --version
+      gcc --version
+      xcrun --sdk macosx --show-sdk-version
+      env
+      sysctl -a
+    displayName: 'System info'
+  - script: |
+      rm -rf $(WORK_DIR) ; mkdir $(WORK_DIR)
+      rm -rf $(BUILD_DIR) ; mkdir $(BUILD_DIR)
+    displayName: 'Make dir'
+  - task: UsePythonVersion@0
+    inputs:
+      versionSpec: '3.7'
+  - script: |
+      brew install cython
+      brew install automake
+    displayName: 'Install dependencies'
+  - script: brew install ninja
+    displayName: 'Install Ninja'
+  - script: |
+      export PATH="/usr/local/opt/cython/bin:$PATH"
+      export CC=gcc
+      export CXX=g++
+      # Disable errors with Ninja
+      export CXXFLAGS="-Wno-error=unused-command-line-argument"
+      export CFLAGS="-Wno-error=unused-command-line-argument"
+      cmake -GNinja -DVERBOSE_BUILD=ON -DCMAKE_BUILD_TYPE=$(BUILD_TYPE) -DENABLE_PYTHON=ON -DENABLE_TESTS=ON $(REPO_DIR)
+    workingDirectory: $(BUILD_DIR)
+    displayName: 'CMake'
+  - script: ninja
+    workingDirectory: $(BUILD_DIR)
+    displayName: 'Build Mac'
+  - script: ls -alR $(REPO_DIR)/bin/
+    displayName: 'List files'
+  - script: $(BIN_DIR)/unit-test --gtest_print_time=1 --gtest_filter=-backend_api.config_unsupported:*IE_GPU*:IE_CPU.onnx_model_sigmoid
+    displayName: 'nGraph UT'
+    continueOnError: false
+  - script: $(BIN_DIR)/InferenceEngineUnitTests --gtest_print_time=1
+    displayName: 'IE UT old'
+    continueOnError: false
+  - script: $(BIN_DIR)/ieUnitTests
+    displayName: 'IE UT'
+    continueOnError: false
+  - script: $(BIN_DIR)/cpuUnitTests
+    displayName: 'CPU UT'
+    continueOnError: false
+  - script: $(BIN_DIR)/vpuUnitTests
+    displayName: 'VPU UT'
+    continueOnError: false
+  - script: $(BIN_DIR)/ieFuncTests
+    displayName: 'IE FuncTests'
+    continueOnError: false
+  - script: $(BIN_DIR)/cpuFuncTests --gtest_print_time=1
+    displayName: 'CPU FuncTests'
+    continueOnError: false
+  - script: $(BIN_DIR)/MklDnnBehaviorTests
+    displayName: 'MklDnnBehaviorTests'
+    continueOnError: false
+  - script: |
+      git clone https://github.com/openvinotoolkit/testdata.git
+      git clone https://github.com/google/gtest-parallel.git
+    workingDirectory: $(WORK_DIR)
+    displayName: 'Clone testdata & gtest-parallel'
+  - script: |
+      export DATA_PATH=$(WORK_DIR)/testdata
+      export MODELS_PATH=$(WORK_DIR)/testdata
+      python3 $(WORK_DIR)/gtest-parallel/gtest-parallel $(BIN_DIR)/MklDnnFunctionalTests --workers=$(WORKERS_NUMBER) --print_test_times --dump_json_test_results=MklDnnFunctionalTests.json --gtest_filter=-smoke_MobileNet/ModelTransformationsTest.LPT/mobilenet_v2_tf_depthwise_batch1_inPluginDisabled_inTestDisabled_asymmetric* -- --gtest_print_time=1
+    workingDirectory: $(WORK_DIR)
+    displayName: 'MklDnnFunctionalTests'
+    continueOnError: false
+  - script: |
+      export DATA_PATH=$(WORK_DIR)/testdata
+      export MODELS_PATH=$(WORK_DIR)/testdata
+      $(BIN_DIR)/InferenceEngineCAPITests
+    displayName: 'IE CAPITests'
+    continueOnError: false
+

133

.ci/azure/windows.yml

Normal file

@@ -0,0 +1,133 @@
+jobs:
+- job: Win
+  # About 150% of total time
+  timeoutInMinutes: 120
+  pool:
+    name: WIN_VMSS_VENV_F8S_WU2
+  variables:
+    system.debug: true
+    WORKERS_NUMBER: 8
+    BUILD_TYPE: Release
+    REPO_DIR: $(Build.Repository.LocalPath)
+    WORK_DIR: $(Pipeline.Workspace)\_w
+    BUILD_DIR: D:\build
+    BIN_DIR: $(REPO_DIR)\bin\intel64
+    MSVS_VARS_PATH: C:\Program Files (x86)\Microsoft Visual Studio\2019\Enterprise\VC\Auxiliary\Build\vcvars64.bat
+    MSVC_COMPILER_PATH: C:\Program Files (x86)\Microsoft Visual Studio\2019\Enterprise\VC\Tools\MSVC\14.24.28314\bin\Hostx64\x64\cl.exe
+  steps:
+  - checkout: self
+    clean: true
+    fetchDepth: 1
+    lfs: false
+    submodules: recursive
+    path: openvino
+  - script: |
+      powershell -command "Invoke-RestMethod -Headers @{\"Metadata\"=\"true\"} -Method GET -Uri http://169.254.169.254/metadata/instance/compute?api-version=2019-06-01 | format-custom"
+      where python3
+      where python
+      python --version
+      wmic computersystem get TotalPhysicalMemory
+      wmic cpu list
+      wmic logicaldisk get description,name
+      wmic VOLUME list
+      set
+    displayName: 'System info'
+  - script: |
+      rd /Q /S $(WORK_DIR) & mkdir $(WORK_DIR)
+      rd /Q /S $(BUILD_DIR) & mkdir $(BUILD_DIR)
+    displayName: 'Make dir'
+  - script: |
+      certutil -urlcache -split -f https://github.com/ninja-build/ninja/releases/download/v1.10.0/ninja-win.zip ninja-win.zip
+      powershell -command "Expand-Archive -Force ninja-win.zip"
+    workingDirectory: $(WORK_DIR)
+    displayName: Install Ninja
+  - script: |
+      certutil -urlcache -split -f https://incredibuilddiag1wu2.blob.core.windows.net/incredibuild/IBSetupConsole_9_5_0.exe IBSetupConsole_9_5_0.exe
+      call IBSetupConsole_9_5_0.exe /Install /Components=Agent,oneuse /Coordinator=11.1.0.4 /AGENT:OPENFIREWALL=ON /AGENT:AUTOSELECTPORTS=ON /ADDTOPATH=ON /AGENT:INSTALLADDINS=OFF
+    workingDirectory: $(WORK_DIR)
+    displayName: Install IncrediBuild
+  - script: |
+      echo Stop IncrediBuild_Agent && net stop IncrediBuild_Agent
+      reg add HKEY_LOCAL_MACHINE\SOFTWARE\Wow6432Node\Xoreax\IncrediBuild\Builder /f /v LastEnabled /d 0 && echo Start IncrediBuild_Agent && net start IncrediBuild_Agent
+    displayName: Start IncrediBuild
+  - script: |
+      set PATH=$(WORK_DIR)\ninja-win;%PATH%
+      call "$(MSVS_VARS_PATH)" && cmake -GNinja -DCMAKE_BUILD_TYPE=$(BUILD_TYPE) -DENABLE_TESTS=ON -DCMAKE_C_COMPILER:PATH="$(MSVC_COMPILER_PATH)" -DCMAKE_CXX_COMPILER:PATH="$(MSVC_COMPILER_PATH)" $(REPO_DIR)
+    workingDirectory: $(BUILD_DIR)
+    displayName: 'CMake'
+  - script: |
+      set PATH=$(WORK_DIR)\ninja-win;%PATH%
+      call "$(MSVS_VARS_PATH)" && "C:\Program Files (x86)\IncrediBuild\BuildConsole.exe" /COMMAND="ninja" /MaxCPUS=40
+    workingDirectory: $(BUILD_DIR)
+    displayName: 'Build Win'
+  - script: echo Stop IncrediBuild_Agent && net stop IncrediBuild_Agent
+    displayName: Stop IncrediBuild
+    continueOnError: true
+  - script: dir $(REPO_DIR)\bin\ /s /b
+    displayName: 'List files'
+  - script: |
+      set PATH=$(REPO_DIR)\inference-engine\temp\tbb\bin;%PATH%
+      $(BIN_DIR)\unit-test --gtest_print_time=1 --gtest_filter=-backend_api.config_unsupported:*IE_GPU*
+    displayName: 'nGraph UT'
+    continueOnError: false
+  - script: |
+      set PATH=$(REPO_DIR)\inference-engine\temp\tbb\bin;%PATH%
+      $(BIN_DIR)\InferenceEngineUnitTests --gtest_print_time=1
+    displayName: 'IE UT old'
+    continueOnError: false
+  - script: |
+      set PATH=$(REPO_DIR)\inference-engine\temp\tbb\bin;%PATH%
+      $(BIN_DIR)\ieUnitTests
+    displayName: 'IE UT'
+    continueOnError: false
+  - script: |
+      set PATH=$(REPO_DIR)\inference-engine\temp\tbb\bin;%PATH%
+      $(BIN_DIR)\cpuUnitTests
+    displayName: 'CPU UT'
+    continueOnError: false
+  - script: |
+      set PATH=$(REPO_DIR)\inference-engine\temp\tbb\bin;%PATH%
+      $(BIN_DIR)\gnaUnitTests
+    displayName: 'GNA UT'
+    continueOnError: false
+  - script: |
+      set PATH=$(REPO_DIR)\inference-engine\temp\tbb\bin;%PATH%
+      $(BIN_DIR)\vpuUnitTests
+    displayName: 'VPU UT'
+    continueOnError: false
+  - script: |
+      set PATH=$(REPO_DIR)\inference-engine\temp\tbb\bin;%PATH%
+      $(BIN_DIR)\ieFuncTests
+    displayName: 'IE FuncTests'
+    continueOnError: false
+  - script: |
+      set PATH=$(REPO_DIR)\inference-engine\temp\tbb\bin;%PATH%
+      $(BIN_DIR)\cpuFuncTests --gtest_print_time=1
+    displayName: 'CPU FuncTests'
+    continueOnError: false
+  - script: |
+      set PATH=$(REPO_DIR)\inference-engine\temp\tbb\bin;%PATH%
+      $(BIN_DIR)\MklDnnBehaviorTests
+    displayName: 'MklDnnBehaviorTests'
+    continueOnError: false
+  - script: |
+      git clone https://github.com/openvinotoolkit/testdata.git
+      git clone https://github.com/google/gtest-parallel.git
+    workingDirectory: $(BUILD_DIR)
+    displayName: 'Clone testdata & gtest-parallel'
+  # Add for gtest-parallel, it hangs now (CVS-33386)
+  #python $(BUILD_DIR)\gtest-parallel\gtest-parallel $(BIN_DIR)\MklDnnFunctionalTests --workers=$(WORKERS_NUMBER) --print_test_times --dump_json_test_results=MklDnnFunctionalTests.json -- --gtest_print_time=1
+  - script: |
+      set PATH=$(REPO_DIR)\inference-engine\temp\tbb\bin;$(REPO_DIR)\inference-engine\temp\opencv_4.5.0\opencv\bin;%PATH%
+      set DATA_PATH=$(BUILD_DIR)\testdata
+      set MODELS_PATH=$(BUILD_DIR)\testdata
+      $(BIN_DIR)\MklDnnFunctionalTests --gtest_print_time=1
+    displayName: 'MklDnnFunctionalTests'
+    continueOnError: false
+  - script: |
+      set PATH=$(REPO_DIR)\inference-engine\temp\tbb\bin;$(REPO_DIR)\inference-engine\temp\opencv_4.5.0\opencv\bin;%PATH%
+      set DATA_PATH=$(BUILD_DIR)\testdata
+      set MODELS_PATH=$(BUILD_DIR)\testdata
+      $(BIN_DIR)\InferenceEngineCAPITests
+    displayName: 'IE CAPITests'
+    continueOnError: false

@@ -79,5 +79,5 @@ ENV NGRAPH_CPP_BUILD_PATH=/openvino/dist
 ENV LD_LIBRARY_PATH=/openvino/dist/lib
 ENV NGRAPH_ONNX_IMPORT_ENABLE=TRUE
 ENV PYTHONPATH=/openvino/bin/intel64/Release/lib/python_api/python3.8:${PYTHONPATH}
-RUN git clone --recursive https://github.com/pybind/pybind11.git
+RUN git clone --recursive https://github.com/pybind/pybind11.git -b v2.5.0 --depth 1
 CMD tox

@@ -8,17 +8,7 @@ cmake_policy(SET CMP0054 NEW)
 # it allows to install targets created outside of current projects
 # See https://blog.kitware.com/cmake-3-13-0-available-for-download/
 
-if (APPLE)
-    if(CMAKE_GENERATOR STREQUAL "Xcode")
-        # due to https://gitlab.kitware.com/cmake/cmake/issues/14254
-        cmake_minimum_required(VERSION 3.12.0 FATAL_ERROR)
-    else()
-        # due to https://cmake.org/cmake/help/v3.12/policy/CMP0068.html
-        cmake_minimum_required(VERSION 3.9 FATAL_ERROR)
-    endif()
-else()
-    cmake_minimum_required(VERSION 3.7.2 FATAL_ERROR)
-endif()
+cmake_minimum_required(VERSION 3.13 FATAL_ERROR)
 
 project(OpenVINO)
 

1

Jenkinsfile

vendored

@@ -6,5 +6,4 @@ properties([
name: 'failFast')
])
])

dldtPipelineEntrypoint(this)

@@ -1,5 +1,5 @@
 # [OpenVINO™ Toolkit](https://01.org/openvinotoolkit) - Deep Learning Deployment Toolkit repository
-[](https://github.com/openvinotoolkit/openvino/releases/tag/2020.4.0)
+[](https://github.com/openvinotoolkit/openvino/releases/tag/2021.1)
 [](LICENSE)
 
 This toolkit allows developers to deploy pre-trained deep learning models

@@ -1,351 +0,0 @@
-jobs:
-- job: Lin
-  # About 150% of total time
-  timeoutInMinutes: 85
-  pool:
-    name: LIN_VMSS_VENV_F8S_WU2
-  variables:
-    system.debug: true
-    WORKERS_NUMBER: 8
-    BUILD_TYPE: Release
-    REPO_DIR: $(Build.Repository.LocalPath)
-    WORK_DIR: $(Pipeline.Workspace)/_w
-    BUILD_DIR: $(WORK_DIR)/build
-    BIN_DIR: $(REPO_DIR)/bin/intel64/$(BUILD_TYPE)
-  steps:
-  - checkout: self
-    clean: true
-    fetchDepth: 1
-    lfs: false
-    submodules: recursive
-    path: openvino
-  - script: |
-      curl -H Metadata:true --noproxy "*" "http://169.254.169.254/metadata/instance?api-version=2019-06-01"
-      whoami
-      uname -a
-      which python3
-      python3 --version
-      gcc --version
-      lsb_release
-      env
-      cat /proc/cpuinfo
-      cat /proc/meminfo
-      vmstat -s
-      df
-    displayName: 'System properties'
-  - script: |
-      rm -rf $(WORK_DIR) ; mkdir $(WORK_DIR)
-      rm -rf $(BUILD_DIR) ; mkdir $(BUILD_DIR)
-    displayName: 'Make dir'
-  - script: |
-      sudo apt --assume-yes install libusb-1.0-0-dev
-      python3 -m pip install -r ./inference-engine/ie_bridges/python/requirements.txt
-      # For running Python API tests
-      python3 -m pip install -r ./inference-engine/ie_bridges/python/src/requirements-dev.txt
-    displayName: 'Install dependencies'
-  - script: |
-      wget https://github.com/ninja-build/ninja/releases/download/v1.10.0/ninja-linux.zip
-      unzip ninja-linux.zip
-      sudo cp -v ninja /usr/local/bin/
-    workingDirectory: $(WORK_DIR)
-    displayName: 'Install Ninja'
-  - task: CMake@1
-    inputs:
-      # CMake must get Python 3.x version by default
-      cmakeArgs: -GNinja -DVERBOSE_BUILD=ON -DCMAKE_BUILD_TYPE=$(BUILD_TYPE) -DENABLE_PYTHON=ON -DPYTHON_EXECUTABLE=/usr/bin/python3.6 -DENABLE_TESTS=ON $(REPO_DIR)
-      workingDirectory: $(BUILD_DIR)
-  - script: ninja
-    workingDirectory: $(BUILD_DIR)
-    displayName: 'Build Lin'
-  - script: ls -alR $(REPO_DIR)/bin/
-    displayName: 'List files'
-  - script: $(BIN_DIR)/unit-test --gtest_print_time=1 --gtest_filter=-backend_api.config_unsupported:*IE_GPU*
-    displayName: 'nGraph UT'
-    continueOnError: false
-  - script: $(BIN_DIR)/InferenceEngineUnitTests --gtest_print_time=1
-    displayName: 'IE UT old'
-    continueOnError: false
-  - script: $(BIN_DIR)/ieUnitTests
-    displayName: 'IE UT'
-    continueOnError: false
-  - script: $(BIN_DIR)/cpuUnitTests
-    displayName: 'CPU UT'
-    continueOnError: false
-  - script: $(BIN_DIR)/gnaUnitTests
-    displayName: 'GNA UT'
-    continueOnError: false
-  - script: $(BIN_DIR)/vpuUnitTests
-    displayName: 'VPU UT'
-    continueOnError: false
-  - script: $(BIN_DIR)/ieFuncTests
-    displayName: 'IE FuncTests'
-    continueOnError: false
-  - script: $(BIN_DIR)/cpuFuncTests --gtest_print_time=1
-    displayName: 'CPU FuncTests'
-    continueOnError: false
-  - script: $(BIN_DIR)/MklDnnBehaviorTests
-    displayName: 'MklDnnBehaviorTests'
-    continueOnError: false
-  - script: |
-      git clone https://github.com/openvinotoolkit/testdata.git
-      git clone https://github.com/google/gtest-parallel.git
-    workingDirectory: $(WORK_DIR)
-    displayName: 'Clone testdata & gtest-parallel'
-  - script: |
-      export DATA_PATH=$(WORK_DIR)/testdata
-      export MODELS_PATH=$(WORK_DIR)/testdata
-      python3 $(WORK_DIR)/gtest-parallel/gtest-parallel $(BIN_DIR)/MklDnnFunctionalTests --workers=$(WORKERS_NUMBER) --print_test_times --dump_json_test_results=MklDnnFunctionalTests.json -- --gtest_print_time=1
-    workingDirectory: $(WORK_DIR)
-    displayName: 'MklDnnFunctionalTests'
-    continueOnError: false
-  - script: |
-      export DATA_PATH=$(WORK_DIR)/testdata
-      export MODELS_PATH=$(WORK_DIR)/testdata
-      $(BIN_DIR)/InferenceEngineCAPITests
-    displayName: 'IE CAPITests'
-    continueOnError: false
-  - script: |
-      export DATA_PATH=$(WORK_DIR)/testdata
-      export MODELS_PATH=$(WORK_DIR)/testdata
-      export LD_LIBRARY_PATH=$(BIN_DIR)/lib
-      export PYTHONPATH=$(BIN_DIR)/lib/python_api/python3.6
-      env
-      cd $(REPO_DIR)/inference-engine/ie_bridges/python/tests
-      pytest
-    displayName: 'Python API Tests'
-    continueOnError: false
-    enabled: false
-

-- job: Mac
-  # About 200% of total time (performance of Mac hosts is unstable)
-  timeoutInMinutes: 180
-  pool:
-    vmImage: 'macOS-10.15'
-  variables:
-    system.debug: true
-    WORKERS_NUMBER: 3
-    BUILD_TYPE: Release
-    REPO_DIR: $(Build.Repository.LocalPath)
-    WORK_DIR: $(Pipeline.Workspace)/_w
-    BUILD_DIR: $(WORK_DIR)/build
-    BIN_DIR: $(REPO_DIR)/bin/intel64/$(BUILD_TYPE)
-  steps:
-  - checkout: self
-    clean: true
-    fetchDepth: 1
-    lfs: false
-    submodules: recursive
-    path: openvino
-  - script: |
-      whoami
-      uname -a
-      which python3
-      python3 --version
-      gcc --version
-      xcrun --sdk macosx --show-sdk-version
-      env
-      sysctl -a
-    displayName: 'System properties'
-  - script: |
-      rm -rf $(WORK_DIR) ; mkdir $(WORK_DIR)
-      rm -rf $(BUILD_DIR) ; mkdir $(BUILD_DIR)
-    displayName: 'Make dir'
-  - task: UsePythonVersion@0
-    inputs:
-      versionSpec: '3.7'
-  - script: |
-      brew install cython
-      brew install automake
-    displayName: 'Install dependencies'
-  - script: brew install ninja
-    displayName: 'Install Ninja'
-  - script: |
-      export PATH="/usr/local/opt/cython/bin:$PATH"
-      export CC=gcc
-      export CXX=g++
-      # Disable errors with Ninja
-      export CXXFLAGS="-Wno-error=unused-command-line-argument"
-      export CFLAGS="-Wno-error=unused-command-line-argument"
-      cmake -GNinja -DVERBOSE_BUILD=ON -DCMAKE_BUILD_TYPE=$(BUILD_TYPE) -DENABLE_PYTHON=ON -DENABLE_TESTS=ON $(REPO_DIR)
-    workingDirectory: $(BUILD_DIR)
-    displayName: 'CMake'
-  - script: ninja
-    workingDirectory: $(BUILD_DIR)
-    displayName: 'Build Mac'
-  - script: ls -alR $(REPO_DIR)/bin/
-    displayName: 'List files'
-  - script: $(BIN_DIR)/unit-test --gtest_print_time=1 --gtest_filter=-backend_api.config_unsupported:*IE_GPU*:IE_CPU.onnx_model_sigmoid
-    displayName: 'nGraph UT'
-    continueOnError: false
-  - script: $(BIN_DIR)/InferenceEngineUnitTests --gtest_print_time=1
-    displayName: 'IE UT old'
-    continueOnError: false
-  - script: $(BIN_DIR)/ieUnitTests
-    displayName: 'IE UT'
-    continueOnError: false
-  - script: $(BIN_DIR)/cpuUnitTests
-    displayName: 'CPU UT'
-    continueOnError: false
-  - script: $(BIN_DIR)/vpuUnitTests
-    displayName: 'VPU UT'
-    continueOnError: false
-  - script: $(BIN_DIR)/ieFuncTests
-    displayName: 'IE FuncTests'
-    continueOnError: false
-  - script: $(BIN_DIR)/cpuFuncTests --gtest_print_time=1
-    displayName: 'CPU FuncTests'
-    continueOnError: false
-  - script: $(BIN_DIR)/MklDnnBehaviorTests
-    displayName: 'MklDnnBehaviorTests'
-    continueOnError: false
-  - script: |
-      git clone https://github.com/openvinotoolkit/testdata.git
-      git clone https://github.com/google/gtest-parallel.git
-    workingDirectory: $(WORK_DIR)
-    displayName: 'Clone testdata & gtest-parallel'
-  - script: |
-      export DATA_PATH=$(WORK_DIR)/testdata
-      export MODELS_PATH=$(WORK_DIR)/testdata
-      python3 $(WORK_DIR)/gtest-parallel/gtest-parallel $(BIN_DIR)/MklDnnFunctionalTests --workers=$(WORKERS_NUMBER) --print_test_times --dump_json_test_results=MklDnnFunctionalTests.json --gtest_filter=-smoke_MobileNet/ModelTransformationsTest.LPT/mobilenet_v2_tf_depthwise_batch1_inPluginDisabled_inTestDisabled_asymmetric* -- --gtest_print_time=1
-    workingDirectory: $(WORK_DIR)
-    displayName: 'MklDnnFunctionalTests'
-    continueOnError: false
-  - script: |
-      export DATA_PATH=$(WORK_DIR)/testdata
-      export MODELS_PATH=$(WORK_DIR)/testdata
-      $(BIN_DIR)/InferenceEngineCAPITests
-    displayName: 'IE CAPITests'
-    continueOnError: false
-

-- job: Win
-  # About 150% of total time
-  timeoutInMinutes: 120
-  pool:
-    name: WIN_VMSS_VENV_F8S_WU2
-  variables:
-    system.debug: true
-    WORKERS_NUMBER: 8
-    BUILD_TYPE: Release
-    REPO_DIR: $(Build.Repository.LocalPath)
-    WORK_DIR: $(Pipeline.Workspace)\_w
-    BUILD_DIR: D:\build
-    BIN_DIR: $(REPO_DIR)\bin\intel64
-    MSVS_VARS_PATH: C:\Program Files (x86)\Microsoft Visual Studio\2019\Enterprise\VC\Auxiliary\Build\vcvars64.bat
-    MSVC_COMPILER_PATH: C:\Program Files (x86)\Microsoft Visual Studio\2019\Enterprise\VC\Tools\MSVC\14.24.28314\bin\Hostx64\x64\cl.exe
-  steps:
-  - checkout: self
-    clean: true
-    fetchDepth: 1
-    lfs: false
-    submodules: recursive
-    path: openvino
-  - script: |
-      powershell -command "Invoke-RestMethod -Headers @{\"Metadata\"=\"true\"} -Method GET -Uri http://169.254.169.254/metadata/instance/compute?api-version=2019-06-01 | format-custom"
-      where python3
-      where python
-      python --version
-      wmic computersystem get TotalPhysicalMemory
-      wmic cpu list
-      wmic logicaldisk get description,name
-      wmic VOLUME list
-      set
-    displayName: 'System properties'
-  - script: |
-      rd /Q /S $(WORK_DIR) & mkdir $(WORK_DIR)
-      rd /Q /S $(BUILD_DIR) & mkdir $(BUILD_DIR)
-    displayName: 'Make dir'
-  - script: |
-      certutil -urlcache -split -f https://github.com/ninja-build/ninja/releases/download/v1.10.0/ninja-win.zip ninja-win.zip
-      powershell -command "Expand-Archive -Force ninja-win.zip"
-    workingDirectory: $(WORK_DIR)
-    displayName: Install Ninja
-  - script: |
-      certutil -urlcache -split -f https://incredibuilddiag1wu2.blob.core.windows.net/incredibuild/IBSetupConsole_9_5_0.exe IBSetupConsole_9_5_0.exe
-      call IBSetupConsole_9_5_0.exe /Install /Components=Agent,oneuse /Coordinator=11.1.0.4 /AGENT:OPENFIREWALL=ON /AGENT:AUTOSELECTPORTS=ON /ADDTOPATH=ON /AGENT:INSTALLADDINS=OFF
-    workingDirectory: $(WORK_DIR)
-    displayName: Install IncrediBuild
-  - script: |
-      echo Stop IncrediBuild_Agent && net stop IncrediBuild_Agent
-      reg add HKEY_LOCAL_MACHINE\SOFTWARE\Wow6432Node\Xoreax\IncrediBuild\Builder /f /v LastEnabled /d 0 && echo Start IncrediBuild_Agent && net start IncrediBuild_Agent
-    displayName: Start IncrediBuild
-  - script: |
-      set PATH=$(WORK_DIR)\ninja-win;%PATH%
-      call "$(MSVS_VARS_PATH)" && cmake -GNinja -DCMAKE_BUILD_TYPE=$(BUILD_TYPE) -DENABLE_TESTS=ON -DCMAKE_C_COMPILER:PATH="$(MSVC_COMPILER_PATH)" -DCMAKE_CXX_COMPILER:PATH="$(MSVC_COMPILER_PATH)" $(REPO_DIR)
-    workingDirectory: $(BUILD_DIR)
-    displayName: 'CMake'
-  - script: |
-      set PATH=$(WORK_DIR)\ninja-win;%PATH%
-      call "$(MSVS_VARS_PATH)" && "C:\Program Files (x86)\IncrediBuild\BuildConsole.exe" /COMMAND="ninja" /MaxCPUS=40
-    workingDirectory: $(BUILD_DIR)
-    displayName: 'Build Win'
-  - script: echo Stop IncrediBuild_Agent && net stop IncrediBuild_Agent
-    displayName: Stop IncrediBuild
-    continueOnError: true
-  - script: dir $(REPO_DIR)\bin\ /s /b
-    displayName: 'List files'
-  - script: |
-      set PATH=$(REPO_DIR)\inference-engine\temp\tbb\bin;%PATH%
-      $(BIN_DIR)\unit-test --gtest_print_time=1 --gtest_filter=-backend_api.config_unsupported:*IE_GPU*
-    displayName: 'nGraph UT'
-    continueOnError: false
-  - script: |
-      set PATH=$(REPO_DIR)\inference-engine\temp\tbb\bin;%PATH%
-      $(BIN_DIR)\InferenceEngineUnitTests --gtest_print_time=1
-    displayName: 'IE UT old'
-    continueOnError: false
-  - script: |
-      set PATH=$(REPO_DIR)\inference-engine\temp\tbb\bin;%PATH%
-      $(BIN_DIR)\ieUnitTests
-    displayName: 'IE UT'
-    continueOnError: false
-  - script: |
-      set PATH=$(REPO_DIR)\inference-engine\temp\tbb\bin;%PATH%
-      $(BIN_DIR)\cpuUnitTests
-    displayName: 'CPU UT'
-    continueOnError: false
-  - script: |
-      set PATH=$(REPO_DIR)\inference-engine\temp\tbb\bin;%PATH%
-      $(BIN_DIR)\gnaUnitTests
-    displayName: 'GNA UT'
-    continueOnError: false
-  - script: |
-      set PATH=$(REPO_DIR)\inference-engine\temp\tbb\bin;%PATH%
-      $(BIN_DIR)\vpuUnitTests
-    displayName: 'VPU UT'
-    continueOnError: false
-  - script: |
-      set PATH=$(REPO_DIR)\inference-engine\temp\tbb\bin;%PATH%
-      $(BIN_DIR)\ieFuncTests
-    displayName: 'IE FuncTests'
-    continueOnError: false
-  - script: |
-      set PATH=$(REPO_DIR)\inference-engine\temp\tbb\bin;%PATH%
-      $(BIN_DIR)\cpuFuncTests --gtest_print_time=1
-    displayName: 'CPU FuncTests'
-    continueOnError: false
-  - script: |
-      set PATH=$(REPO_DIR)\inference-engine\temp\tbb\bin;%PATH%
-      $(BIN_DIR)\MklDnnBehaviorTests
-    displayName: 'MklDnnBehaviorTests'
-    continueOnError: false
-  - script: |
-      git clone https://github.com/openvinotoolkit/testdata.git
-      git clone https://github.com/google/gtest-parallel.git

|

||||

workingDirectory: $(BUILD_DIR)

|

||||

displayName: 'Clone testdata & gtest-parallel'

|

||||

# Add for gtest-parallel, it hangs now (CVS-33386)

|

||||

#python $(BUILD_DIR)\gtest-parallel\gtest-parallel $(BIN_DIR)\MklDnnFunctionalTests --workers=$(WORKERS_NUMBER) --print_test_times --dump_json_test_results=MklDnnFunctionalTests.json -- --gtest_print_time=1

|

||||

- script: |

|

||||

set PATH=$(REPO_DIR)\inference-engine\temp\tbb\bin;$(REPO_DIR)\inference-engine\temp\opencv_4.3.0\opencv\bin;%PATH%

|

||||

set DATA_PATH=$(BUILD_DIR)\testdata

|

||||

set MODELS_PATH=$(BUILD_DIR)\testdata

|

||||

$(BIN_DIR)\MklDnnFunctionalTests --gtest_print_time=1

|

||||

displayName: 'MklDnnFunctionalTests'

|

||||

continueOnError: false

|

||||

- script: |

|

||||

set PATH=$(REPO_DIR)\inference-engine\temp\tbb\bin;$(REPO_DIR)\inference-engine\temp\opencv_4.3.0\opencv\bin;%PATH%

|

||||

set DATA_PATH=$(BUILD_DIR)\testdata

|

||||

set MODELS_PATH=$(BUILD_DIR)\testdata

|

||||

$(BIN_DIR)\InferenceEngineCAPITests

|

||||

displayName: 'IE CAPITests'

|

||||

continueOnError: false

|

||||

@@ -46,21 +46,19 @@ The open source version of Inference Engine includes the following plugins:

|

||||

| MYRIAD plugin | Intel® Movidius™ Neural Compute Stick powered by the Intel® Movidius™ Myriad™ 2, Intel® Neural Compute Stick 2 powered by the Intel® Movidius™ Myriad™ X |

|

||||

| Heterogeneous plugin | Heterogeneous plugin enables computing for inference on one network on several Intel® devices. |

|

||||

|

||||

Inference Engine plugin for Intel® FPGA is distributed only in a binary form,

|

||||

as a part of [Intel® Distribution of OpenVINO™].

|

||||

|

||||

## Build on Linux\* Systems

|

||||

|

||||

The software was validated on:

|

||||

- Ubuntu\* 18.04 (64-bit) with default GCC\* 7.5.0

|

||||

- Ubuntu\* 16.04 (64-bit) with default GCC\* 5.4.0

|

||||

- CentOS\* 7.4 (64-bit) with default GCC\* 4.8.5

|

||||

- Ubuntu\* 20.04 (64-bit) with default GCC\* 9.3.0

|

||||

- CentOS\* 7.6 (64-bit) with default GCC\* 4.8.5

|

||||

|

||||

### Software Requirements

|

||||

- [CMake]\* 3.11 or higher

|

||||

- [CMake]\* 3.13 or higher

|

||||

- GCC\* 4.8 or higher to build the Inference Engine

|

||||

- Python 3.5 or higher for Inference Engine Python API wrapper

|

||||

- Python 3.6 or higher for Inference Engine Python API wrapper

|

||||

- (Optional) [Install Intel® Graphics Compute Runtime for OpenCL™ Driver package 19.41.14441].

|

||||

> **NOTE**: Building samples and demos from the Intel® Distribution of OpenVINO™ toolkit package requires CMake\* 3.10 or higher.
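
The CMake requirement listed above can be checked before configuring. The following is a minimal sketch, not part of the official build scripts: the helper name `version_ge` is hypothetical, the threshold `3.13` is taken from the requirement list above, and it assumes a `sort` that supports `-V` (GNU coreutils and recent BSD `sort`).

```sh
# Hypothetical helper: succeed if version $1 is greater than or equal to $2.
# Assumes `sort -V` (version sort) is available.
version_ge() {
    [ "$(printf '%s\n%s\n' "$2" "$1" | sort -V | head -n 1)" = "$2" ]
}

required="3.13"
if command -v cmake >/dev/null 2>&1; then
    # `cmake --version` prints "cmake version X.Y.Z" on its first line.
    found="$(cmake --version | head -n 1 | awk '{print $3}')"
    if version_ge "$found" "$required"; then
        echo "CMake $found satisfies >= $required"
    else
        echo "CMake $found is older than $required"
    fi
else
    echo "cmake not found on PATH"
fi
```

Using a version sort rather than a plain string comparison matters here, because a lexical comparison would rank `3.9` above `3.13`.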

|

||||

|

||||

### Build Steps

|

||||

1. Clone submodules:

|

||||

@@ -331,14 +329,14 @@ You can use the following additional build options:

|

||||

## Build on Windows* Systems

|

||||

|

||||

The software was validated on:

|

||||

- Microsoft\* Windows\* 10 (64-bit) with Visual Studio 2017 and Intel® C++

|

||||

Compiler 2018 Update 3

|

||||

- Microsoft\* Windows\* 10 (64-bit) with Visual Studio 2019

|

||||

|

||||

### Software Requirements

|

||||

- [CMake]\* 3.11 or higher

|

||||

- Microsoft\* Visual Studio 2017, 2019 or [Intel® C++ Compiler] 18.0

|

||||

- [CMake]\* 3.13 or higher

|

||||

- Microsoft\* Visual Studio 2017, 2019

|

||||

- (Optional) Intel® Graphics Driver for Windows* (26.20) [driver package].

|

||||

- Python 3.5 or higher for Inference Engine Python API wrapper

|

||||

- Python 3.6 or higher for Inference Engine Python API wrapper

|

||||

> **NOTE**: Building samples and demos from the Intel® Distribution of OpenVINO™ toolkit package requires CMake\* 3.10 or higher.

|

||||

|

||||

### Build Steps

|

||||

|

||||

@@ -369,20 +367,13 @@ cmake -G "Visual Studio 15 2017 Win64" -DCMAKE_BUILD_TYPE=Release ..

|

||||

cmake -G "Visual Studio 16 2019" -A x64 -DCMAKE_BUILD_TYPE=Release ..

|

||||

```

|

||||

|

||||

For Intel® C++ Compiler 18:

|

||||

```sh

|

||||

cmake -G "Visual Studio 15 2017 Win64" -T "Intel C++ Compiler 18.0" ^

|

||||

-DCMAKE_BUILD_TYPE=Release ^

|

||||

-DICCLIB="C:\Program Files (x86)\IntelSWTools\compilers_and_libraries_2018\windows\compiler\lib" ..

|

||||

```

|

||||

|

||||

5. Build generated solution in Visual Studio or run

|

||||

`cmake --build . --config Release` to build from the command line.

|

||||

|

||||

6. Before running the samples, add paths to the TBB and OpenCV binaries used for

|

||||

the build to the `%PATH%` environment variable. By default, TBB binaries are

|

||||

downloaded by the CMake-based script to the `<openvino_repo>/inference-engine/temp/tbb/bin`

|

||||

folder, OpenCV binaries to the `<openvino_repo>/inference-engine/temp/opencv_4.3.0/opencv/bin`

|

||||

folder, OpenCV binaries to the `<openvino_repo>/inference-engine/temp/opencv_4.5.0/opencv/bin`

|

||||

folder.

|

||||

|

||||

### Additional Build Options

|

||||

@@ -448,13 +439,14 @@ cmake --build . --config Release

|

||||

inference on Intel CPUs only.

|

||||

|

||||

The software was validated on:

|

||||

- macOS\* 10.14, 64-bit

|

||||

- macOS\* 10.15, 64-bit

|

||||

|

||||

### Software Requirements

|

||||

|

||||

- [CMake]\* 3.11 or higher

|

||||

- [CMake]\* 3.13 or higher

|

||||

- Clang\* compiler from Xcode\* 10.1 or higher

|

||||

- Python\* 3.5 or higher for the Inference Engine Python API wrapper

|

||||

- Python\* 3.6 or higher for the Inference Engine Python API wrapper

|

||||

> **NOTE**: Building samples and demos from the Intel® Distribution of OpenVINO™ toolkit package requires CMake\* 3.10 or higher.

|

||||

|

||||

### Build Steps

|

||||

|

||||

@@ -463,19 +455,11 @@ The software was validated on:

|

||||

cd openvino

|

||||

git submodule update --init --recursive

|

||||

```

|

||||

2. Install build dependencies using the `install_dependencies.sh` script in the

|

||||

project root folder:

|

||||

```sh

|

||||

chmod +x install_dependencies.sh

|

||||

```

|

||||

```sh

|

||||

./install_dependencies.sh

|

||||

```

|

||||

3. Create a build folder:

|

||||

2. Create a build folder:

|

||||

```sh

|

||||

mkdir build

|

||||

mkdir build && cd build

|

||||

```

|

||||

4. Inference Engine uses a CMake-based build system. In the created `build`

|

||||

3. Inference Engine uses a CMake-based build system. In the created `build`

|

||||

directory, run `cmake` to fetch project dependencies and create Unix makefiles,

|

||||

then run `make` to build the project:

|

||||

```sh

|

||||

@@ -511,12 +495,17 @@ You can use the following additional build options:

|

||||

|

||||

- To build the Python API wrapper, use the `-DENABLE_PYTHON=ON` option. To

|

||||

specify an exact Python version, use the following options:

|

||||

```sh

|

||||

-DPYTHON_EXECUTABLE=/Library/Frameworks/Python.framework/Versions/3.7/bin/python3.7 \

|

||||

-DPYTHON_LIBRARY=/Library/Frameworks/Python.framework/Versions/3.7/lib/libpython3.7m.dylib \

|

||||

-DPYTHON_INCLUDE_DIR=/Library/Frameworks/Python.framework/Versions/3.7/include/python3.7m

|

||||

```

|

||||

|

||||

- If you installed Python through Homebrew*, set the following flags:

|

||||

```sh

|

||||

-DPYTHON_EXECUTABLE=/usr/local/Cellar/python/3.7.7/Frameworks/Python.framework/Versions/3.7/bin/python3.7m \

|

||||

-DPYTHON_LIBRARY=/usr/local/Cellar/python/3.7.7/Frameworks/Python.framework/Versions/3.7/lib/libpython3.7m.dylib \

|

||||

-DPYTHON_INCLUDE_DIR=/usr/local/Cellar/python/3.7.7/Frameworks/Python.framework/Versions/3.7/include/python3.7m

|

||||

```

|

||||

- If you installed Python another way, you can use the following commands to find where the `dylib` and `include_dir` are located, respectively:

|

||||

```sh

|

||||

find /usr/ -name 'libpython*m.dylib'

|

||||

find /usr/ -type d -name python3.7m

|

||||

```

|

||||

- nGraph-specific compilation options:

|

||||

`-DNGRAPH_ONNX_IMPORT_ENABLE=ON` enables the building of the nGraph ONNX importer.

|

||||

`-DNGRAPH_DEBUG_ENABLE=ON` enables additional debug prints.
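
For the Python paths discussed in the list above, an alternative to searching with `find` is to ask the interpreter itself. This is a sketch under the assumption that the `python3` found on `PATH` is the interpreter you want to build against; it uses only the standard `sysconfig` module, and the variable names `PY`, `PY_INCLUDE`, and `PY_LIBDIR` are illustrative.

```sh
# Assumption: the python3 on PATH is the interpreter to build against.
PY="$(command -v python3 || true)"
if [ -z "$PY" ]; then
    echo "python3 not found on PATH"
else
    # Directory that contains Python.h for this interpreter.
    PY_INCLUDE="$("$PY" -c 'import sysconfig; print(sysconfig.get_paths()["include"])')"
    # Directory where the interpreter's shared library is normally installed.
    PY_LIBDIR="$("$PY" -c 'import sysconfig; print(sysconfig.get_config_var("LIBDIR"))')"

    echo "-DPYTHON_EXECUTABLE=$PY"
    echo "-DPYTHON_INCLUDE_DIR=$PY_INCLUDE"
    echo "Look for the libpython library under: $PY_LIBDIR"
fi
```

The exact library file name varies between Python versions and installers, so the sketch reports only the directory and leaves the `-DPYTHON_LIBRARY` value to be filled in by hand.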

|

||||

@@ -527,8 +516,9 @@ This section describes how to build Inference Engine for Android x86 (64-bit) op

|

||||

|

||||

### Software Requirements

|

||||

|

||||

- [CMake]\* 3.11 or higher

|

||||

- [CMake]\* 3.13 or higher

|

||||

- Android NDK (this guide has been validated with r20 release)

|

||||

> **NOTE**: Building samples and demos from the Intel® Distribution of OpenVINO™ toolkit package requires CMake\* 3.10 or higher.

|

||||

|

||||

### Build Steps

|

||||

|

||||

@@ -698,5 +688,4 @@ This target collects all dependencies, prepares the nGraph package and copies it

|

||||

[build instructions]:https://docs.opencv.org/master/df/d65/tutorial_table_of_content_introduction.html

|

||||

[driver package]:https://downloadcenter.intel.com/download/29335/Intel-Graphics-Windows-10-DCH-Drivers

|

||||

[Intel® Neural Compute Stick 2 Get Started]:https://software.intel.com/en-us/neural-compute-stick/get-started

|

||||

[Intel® C++ Compiler]:https://software.intel.com/en-us/intel-parallel-studio-xe

|

||||

[OpenBLAS]:https://sourceforge.net/projects/openblas/files/v0.2.14/OpenBLAS-v0.2.14-Win64-int64.zip/download

|

||||

|

||||

@@ -45,3 +45,6 @@ ie_dependent_option (ENABLE_AVX2 "Enable AVX2 optimizations" ON "X86_64 OR X86"

|

||||

ie_dependent_option (ENABLE_AVX512F "Enable AVX512 optimizations" ON "X86_64 OR X86" OFF)

|

||||

|

||||

ie_dependent_option (ENABLE_PROFILING_ITT "ITT tracing of IE and plugins internals" ON "NOT CMAKE_CROSSCOMPILING" OFF)

|

||||

|

||||

# Documentation build

|

||||

ie_option (ENABLE_DOCS "build docs using Doxygen" OFF)

|

||||

|

||||

@@ -2,59 +2,187 @@

|

||||

# SPDX-License-Identifier: Apache-2.0

|

||||

#

|

||||

|

||||

add_subdirectory(examples)

|

||||

if(NOT ENABLE_DOCKER)

|

||||

add_subdirectory(examples)

|

||||

|

||||

# Detect nGraph

|

||||

find_package(ngraph QUIET)

|

||||

if(NOT ngraph_FOUND)

|

||||

set(ngraph_DIR ${CMAKE_BINARY_DIR}/ngraph)

|

||||

endif()

|

||||

|

||||

# Detect InferenceEngine

|

||||

find_package(InferenceEngine QUIET)

|

||||

if(NOT InferenceEngine_FOUND)

|

||||

set(InferenceEngine_DIR ${CMAKE_BINARY_DIR})

|

||||

endif()

|

||||

|

||||

add_subdirectory(template_extension)

|

||||

|

||||

set(all_docs_targets

|

||||

ie_docs_examples

|

||||

template_extension

|

||||

templatePlugin TemplateBehaviorTests TemplateFunctionalTests)

|

||||

foreach(target_name IN LISTS all_docs_targets)

|

||||

if (TARGET ${target_name})

|

||||

set_target_properties(${target_name} PROPERTIES FOLDER docs)

|

||||

# Detect nGraph

|

||||

find_package(ngraph QUIET)

|

||||

if(NOT ngraph_FOUND)

|

||||

set(ngraph_DIR ${CMAKE_BINARY_DIR}/ngraph)

|

||||

endif()

|

||||

endforeach()

|

||||

|

||||

# OpenVINO docs

|

||||

# Detect InferenceEngine

|

||||

find_package(InferenceEngine QUIET)

|

||||

if(NOT InferenceEngine_FOUND)

|

||||

set(InferenceEngine_DIR ${CMAKE_BINARY_DIR})

|

||||

endif()

|

||||

|

||||

set(OPENVINO_DOCS_PATH "" CACHE PATH "Path to openvino-documentation local repository")

|

||||

set(args "")

|

||||

add_subdirectory(template_extension)

|

||||

|

||||

if(OPENVINO_DOCS_PATH)

|

||||

set(args "${args} ovinodoc_path:${OPENVINO_DOCS_PATH}")

|

||||

set(all_docs_targets

|

||||

ie_docs_examples

|

||||

template_extension

|

||||

templatePlugin TemplateBehaviorTests TemplateFunctionalTests)

|

||||

foreach(target_name IN LISTS all_docs_targets)

|

||||

if (TARGET ${target_name})

|

||||

set_target_properties(${target_name} PROPERTIES FOLDER docs)

|

||||

endif()

|

||||

endforeach()

|

||||

endif()

|

||||

|

||||

file(GLOB_RECURSE docs_files "${OpenVINO_MAIN_SOURCE_DIR}/docs")

|

||||

file(GLOB_RECURSE include_files "${OpenVINO_MAIN_SOURCE_DIR}/inference-engine/include")

|

||||

file(GLOB_RECURSE ovino_files "${OPENVINO_DOCS_PATH}")

|

||||

function(build_docs)

|

||||

find_package(Doxygen REQUIRED dot)

|

||||

find_package(Python3 COMPONENTS Interpreter)

|

||||

find_package(LATEX)

|

||||

|

||||

add_custom_target(ie_docs

|

||||

COMMAND ./build_docs.sh ${args}

|

||||

WORKING_DIRECTORY "${OpenVINO_MAIN_SOURCE_DIR}/docs/build_documentation"

|

||||

COMMENT "Generating OpenVINO documentation"

|

||||

SOURCES ${docs_files} ${include_files} ${ovino_files}

|

||||

VERBATIM)

|

||||

set_target_properties(ie_docs PROPERTIES FOLDER docs)

|

||||

if(NOT DOXYGEN_FOUND)

|

||||

message(FATAL_ERROR "Doxygen is required to build the documentation")

|

||||

endif()

|

||||

|

||||

find_program(browser NAMES xdg-open)

|

||||

if(browser)

|

||||

add_custom_target(ie_docs_open

|

||||

COMMAND ${browser} "${OpenVINO_MAIN_SOURCE_DIR}/doc/html/index.html"

|

||||

DEPENDS ie_docs

|

||||

COMMENT "Open OpenVINO documentation"

|

||||

if(NOT Python3_FOUND)

|

||||

message(FATAL_ERROR "Python3 is required to build the documentation")

|

||||

endif()

|

||||

|

||||

if(NOT LATEX_FOUND)

|

||||

message(FATAL_ERROR "LATEX is required to build the documentation")

|

||||

endif()

|

||||

|

||||

set(DOCS_BINARY_DIR "${CMAKE_CURRENT_BINARY_DIR}")

|

||||

set(DOXYGEN_DIR "${OpenVINO_MAIN_SOURCE_DIR}/docs/doxygen")

|

||||

set(IE_SOURCE_DIR "${OpenVINO_MAIN_SOURCE_DIR}/inference-engine")

|

||||

set(PYTHON_API_IN "${IE_SOURCE_DIR}/ie_bridges/python/src/openvino/inference_engine/ie_api.pyx")

|

||||

set(PYTHON_API_OUT "${DOCS_BINARY_DIR}/python_api/ie_api.pyx")

|

||||

set(C_API "${IE_SOURCE_DIR}/ie_bridges/c/include")

|

||||

set(PLUGIN_API_DIR "${DOCS_BINARY_DIR}/IE_PLUGIN_DG")

|

||||

|

||||

# Preprocessing scripts

|

||||

set(DOXY_MD_FILTER "${DOXYGEN_DIR}/doxy_md_filter.py")

|

||||

set(PYX_FILTER "${DOXYGEN_DIR}/pyx_filter.py")

|

||||

|

||||

file(GLOB_RECURSE doc_source_files

|

||||

LIST_DIRECTORIES true RELATIVE ${OpenVINO_MAIN_SOURCE_DIR}

|

||||

"${OpenVINO_MAIN_SOURCE_DIR}/docs/*.md"

|

||||

"${OpenVINO_MAIN_SOURCE_DIR}/docs/*.png"

|

||||

"${OpenVINO_MAIN_SOURCE_DIR}/docs/*.gif"

|

||||

"${OpenVINO_MAIN_SOURCE_DIR}/docs/*.jpg"

|

||||

"${OpenVINO_MAIN_SOURCE_DIR}/inference-engine/*.md"

|

||||

"${OpenVINO_MAIN_SOURCE_DIR}/inference-engine/*.png"

|

||||

"${OpenVINO_MAIN_SOURCE_DIR}/inference-engine/*.gif"

|

||||

"${OpenVINO_MAIN_SOURCE_DIR}/inference-engine/*.jpg")

|

||||

|

||||

configure_file(${PYTHON_API_IN} ${PYTHON_API_OUT} @ONLY)

|

||||

|

||||

set(IE_CONFIG_SOURCE "${DOXYGEN_DIR}/ie_docs.config")

|

||||

set(C_CONFIG_SOURCE "${DOXYGEN_DIR}/ie_c_api.config")

|

||||

set(PY_CONFIG_SOURCE "${DOXYGEN_DIR}/ie_py_api.config")

|

||||

set(PLUGIN_CONFIG_SOURCE "${DOXYGEN_DIR}/ie_plugin_api.config")

|

||||

|

||||

set(IE_CONFIG_BINARY "${DOCS_BINARY_DIR}/ie_docs.config")

|

||||

set(C_CONFIG_BINARY "${DOCS_BINARY_DIR}/ie_c_api.config")

|

||||

set(PY_CONFIG_BINARY "${DOCS_BINARY_DIR}/ie_py_api.config")

|

||||

set(PLUGIN_CONFIG_BINARY "${DOCS_BINARY_DIR}/ie_plugin_api.config")

|

||||

|

||||

set(IE_LAYOUT_SOURCE "${DOXYGEN_DIR}/ie_docs.xml")

|

||||

set(C_LAYOUT_SOURCE "${DOXYGEN_DIR}/ie_c_api.xml")

|

||||

set(PY_LAYOUT_SOURCE "${DOXYGEN_DIR}/ie_py_api.xml")

|

||||

set(PLUGIN_LAYOUT_SOURCE "${DOXYGEN_DIR}/ie_plugin_api.xml")

|

||||

|

||||

set(IE_LAYOUT_BINARY "${DOCS_BINARY_DIR}/ie_docs.xml")

|

||||

set(C_LAYOUT_BINARY "${DOCS_BINARY_DIR}/ie_c_api.xml")

|

||||

set(PY_LAYOUT_BINARY "${DOCS_BINARY_DIR}/ie_py_api.xml")

|

||||

set(PLUGIN_LAYOUT_BINARY "${DOCS_BINARY_DIR}/ie_plugin_api.xml")

|

||||

|

||||

# Tables of contents

|

||||

configure_file(${IE_LAYOUT_SOURCE} ${IE_LAYOUT_BINARY} @ONLY)

|

||||

configure_file(${C_LAYOUT_SOURCE} ${C_LAYOUT_BINARY} @ONLY)

|

||||

configure_file(${PY_LAYOUT_SOURCE} ${PY_LAYOUT_BINARY} @ONLY)

|

||||

configure_file(${PLUGIN_LAYOUT_SOURCE} ${PLUGIN_LAYOUT_BINARY} @ONLY)

|

||||

|

||||

# Doxygen config files

|

||||

configure_file(${IE_CONFIG_SOURCE} ${IE_CONFIG_BINARY} @ONLY)

|

||||

configure_file(${C_CONFIG_SOURCE} ${C_CONFIG_BINARY} @ONLY)

|

||||

configure_file(${PY_CONFIG_SOURCE} ${PY_CONFIG_BINARY} @ONLY)

|

||||

configure_file(${PLUGIN_CONFIG_SOURCE} ${PLUGIN_CONFIG_BINARY} @ONLY)

|

||||

|

||||

# Preprocessing scripts

|

||||

set(DOXY_MD_FILTER "${DOXYGEN_DIR}/doxy_md_filter.py")

|

||||

set(PYX_FILTER "${DOXYGEN_DIR}/pyx_filter.py")

|

||||

|

||||

# C API

|

||||

|

||||

add_custom_target(c_api

|

||||

COMMAND ${DOXYGEN_EXECUTABLE} ${C_CONFIG_BINARY}

|

||||

WORKING_DIRECTORY ${DOCS_BINARY_DIR}

|

||||

COMMENT "Generating C API Reference"

|

||||

VERBATIM)

|

||||

set_target_properties(ie_docs_open PROPERTIES FOLDER docs)

|

||||

|

||||

# Python API

|

||||

|

||||

add_custom_target(py_api

|

||||

COMMAND ${DOXYGEN_EXECUTABLE} ${PY_CONFIG_BINARY}

|

||||

WORKING_DIRECTORY ${DOCS_BINARY_DIR}

|

||||

COMMENT "Generating Python API Reference"

|

||||

VERBATIM)

|

||||

|

||||

add_custom_command(TARGET py_api

|

||||

PRE_BUILD

|

||||

COMMAND ${Python3_EXECUTABLE} ${PYX_FILTER} ${PYTHON_API_OUT}

|

||||

COMMENT "Pre-process Python API")

|

||||

|

||||

# Plugin API

|

||||

|

||||

add_custom_target(plugin_api

|

||||

COMMAND ${DOXYGEN_EXECUTABLE} ${PLUGIN_CONFIG_BINARY}

|

||||

WORKING_DIRECTORY ${DOCS_BINARY_DIR}

|

||||

COMMENT "Generating Plugin API Reference"

|

||||

VERBATIM)

|

||||

|

||||

# Preprocess docs

|

||||

|

||||

add_custom_target(preprocess_docs

|

||||

COMMENT "Pre-process docs"

|

||||

VERBATIM)

|

||||

|

||||

foreach(source_file ${doc_source_files})

|

||||

list(APPEND commands COMMAND ${CMAKE_COMMAND} -E copy

|

||||

"${OpenVINO_MAIN_SOURCE_DIR}/${source_file}" "${DOCS_BINARY_DIR}/${source_file}")

|

||||

endforeach()

|

||||

|

||||

add_custom_command(TARGET preprocess_docs

|

||||

PRE_BUILD

|

||||

${commands}

|

||||

COMMAND ${Python3_EXECUTABLE} ${DOXY_MD_FILTER} ${DOCS_BINARY_DIR}

|

||||

COMMENT "Pre-process markdown and image links")

|

||||

|

||||

# IE dev guide and C++ API

|

||||

|

||||

add_custom_target(ie_docs

|

||||

DEPENDS preprocess_docs

|

||||

COMMAND ${DOXYGEN_EXECUTABLE} ${IE_CONFIG_BINARY}

|

||||

WORKING_DIRECTORY ${DOCS_BINARY_DIR}

|

||||

VERBATIM)

|

||||

|

||||

# Umbrella OpenVINO target

|

||||

|

||||

add_custom_target(openvino_docs

|

||||

DEPENDS c_api py_api ie_docs plugin_api

|

||||

COMMENT "Generating OpenVINO documentation"

|

||||

VERBATIM)

|

||||

|

||||

set_target_properties(openvino_docs ie_docs c_api py_api preprocess_docs plugin_api

|

||||

PROPERTIES FOLDER docs)

|

||||

|

||||

find_program(browser NAMES xdg-open)

|

||||

if(browser)

|

||||

add_custom_target(ie_docs_open

|

||||

COMMAND ${browser} "${OpenVINO_MAIN_SOURCE_DIR}/docs/html/index.html"

|

||||

DEPENDS ie_docs

|

||||

COMMENT "Open OpenVINO documentation"

|

||||

VERBATIM)

|

||||

set_target_properties(ie_docs_open PROPERTIES FOLDER docs)

|

||||

endif()

|

||||

endfunction()

|

||||

|

||||

if(ENABLE_DOCS)

|

||||

build_docs()

|

||||

endif()

|

||||

|

||||

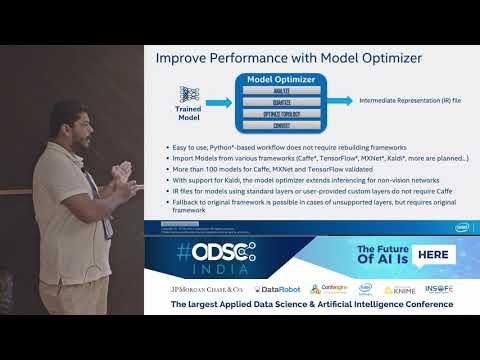

@@ -21,11 +21,11 @@ The original format will be a supported framework such as TensorFlow, Caffe, or

|

||||

|

||||

## Custom Layer Overview

|

||||

|

||||

The [Model Optimizer](https://docs.openvinotoolkit.org/2019_R1.1/_docs_MO_DG_Deep_Learning_Model_Optimizer_DevGuide.html) searches the list of known layers for each layer contained in the input model topology before building the model's internal representation, optimizing the model, and producing the Intermediate Representation files.

|

||||

The [Model Optimizer](../MO_DG/Deep_Learning_Model_Optimizer_DevGuide.md) searches the list of known layers for each layer contained in the input model topology before building the model's internal representation, optimizing the model, and producing the Intermediate Representation files.

|

||||

|

||||

The [Inference Engine](https://docs.openvinotoolkit.org/2019_R1.1/_docs_IE_DG_Deep_Learning_Inference_Engine_DevGuide.html) loads the layers from the input model IR files into the specified device plugin, which will search a list of known layer implementations for the device. If your topology contains layers that are not in the list of known layers for the device, the Inference Engine considers the layer to be unsupported and reports an error. To see the layers that are supported by each device plugin for the Inference Engine, refer to the [Supported Devices](https://docs.openvinotoolkit.org/2019_R1.1/_docs_IE_DG_supported_plugins_Supported_Devices.html) documentation.

|

||||

The [Inference Engine](../IE_DG/Deep_Learning_Inference_Engine_DevGuide.md) loads the layers from the input model IR files into the specified device plugin, which will search a list of known layer implementations for the device. If your topology contains layers that are not in the list of known layers for the device, the Inference Engine considers the layer to be unsupported and reports an error. To see the layers that are supported by each device plugin for the Inference Engine, refer to the [Supported Devices](../IE_DG/supported_plugins/Supported_Devices.md) documentation.

|

||||

<br>

|

||||

**Note:** If a device doesn't support a particular layer, an alternative to creating a new custom layer is to target an additional device using the HETERO plugin. The [Heterogeneous Plugin](https://docs.openvinotoolkit.org/2019_R1.1/_docs_IE_DG_supported_plugins_HETERO.html) may be used to run an inference model on multiple devices allowing the unsupported layers on one device to "fallback" to run on another device (e.g., CPU) that does support those layers.

|

||||

> **NOTE:** If a device doesn't support a particular layer, an alternative to creating a new custom layer is to target an additional device using the HETERO plugin. The [Heterogeneous Plugin](../IE_DG/supported_plugins/HETERO.md) may be used to run an inference model on multiple devices allowing the unsupported layers on one device to "fallback" to run on another device (e.g., CPU) that does support those layers.

|

||||

|

||||

## Custom Layer Implementation Workflow

|

||||

|

||||

@@ -40,7 +40,7 @@ The following figure shows the basic processing steps for the Model Optimizer hi

|

||||

|

||||

The Model Optimizer first extracts information from the input model which includes the topology of the model layers along with parameters, input and output format, etc., for each layer. The model is then optimized from the various known characteristics of the layers, interconnects, and data flow which partly comes from the layer operation providing details including the shape of the output for each layer. Finally, the optimized model is output to the model IR files needed by the Inference Engine to run the model.

|

||||

|

||||

The Model Optimizer starts with a library of known extractors and operations for each [supported model framework](https://docs.openvinotoolkit.org/2019_R1.1/_docs_MO_DG_prepare_model_Supported_Frameworks_Layers.html) which must be extended to use each unknown custom layer. The custom layer extensions needed by the Model Optimizer are:

|

||||

The Model Optimizer starts with a library of known extractors and operations for each [supported model framework](../MO_DG/prepare_model/Supported_Frameworks_Layers.md) which must be extended to use each unknown custom layer. The custom layer extensions needed by the Model Optimizer are:

|

||||

|

||||

- Custom Layer Extractor

|

||||

- Responsible for identifying the custom layer operation and extracting the parameters for each instance of the custom layer. The layer parameters are stored per instance and used by the layer operation before finally appearing in the output IR. Typically the input layer parameters are unchanged, which is the case covered by this tutorial.

|

||||

@@ -182,10 +182,10 @@ There are two options to convert your MXNet* model that contains custom layers:

|

||||

2. If you have sub-graphs that should not be expressed with the analogous sub-graph in the Intermediate Representation, but another sub-graph should appear in the model, the Model Optimizer provides such an option. In MXNet, this option is actively used for SSD models: it provides an opportunity to search for the necessary sub-graph sequences and replace them. To read more, see [Sub-graph Replacement in the Model Optimizer](../MO_DG/prepare_model/customize_model_optimizer/Subgraph_Replacement_Model_Optimizer.md).

|

||||

|

||||

## Kaldi\* Models with Custom Layers <a name="Kaldi-models-with-custom-layers"></a>

|

||||